How to evaluate an AI-native ATS: 10 questions to ask before you sign

Introduction

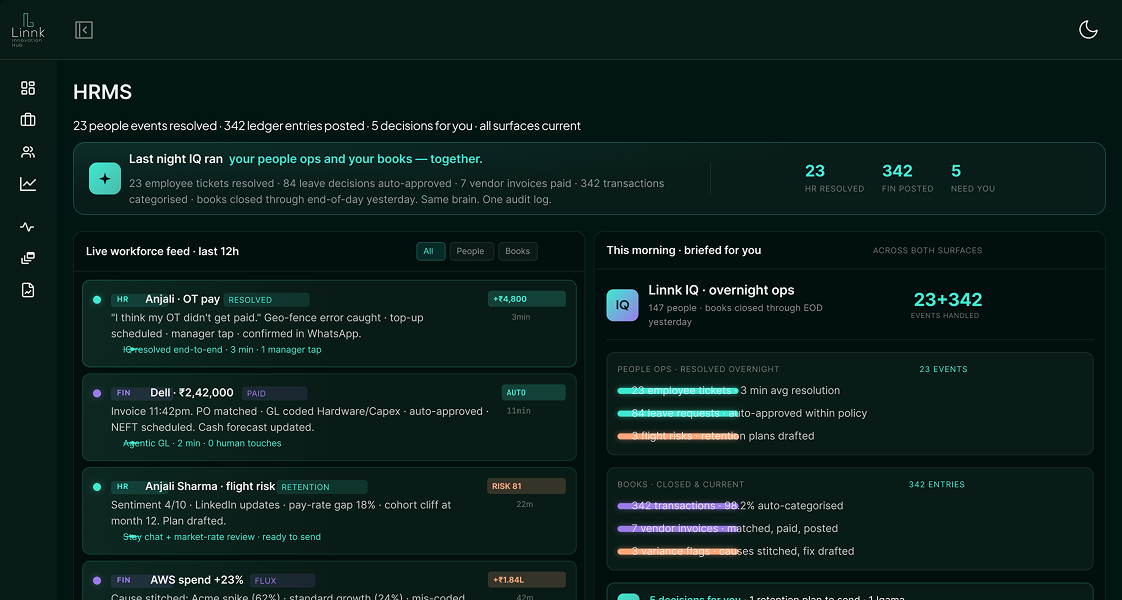

Hiring teams are under pressure to move faster, improve candidate quality, and reduce operational overhead while competing for increasingly selective talent. That pressure has fueled the rise of AI-native Applicant Tracking Systems (ATS): recruiting systems designed with artificial intelligence at the core rather than with AI bolted on as an afterthought.

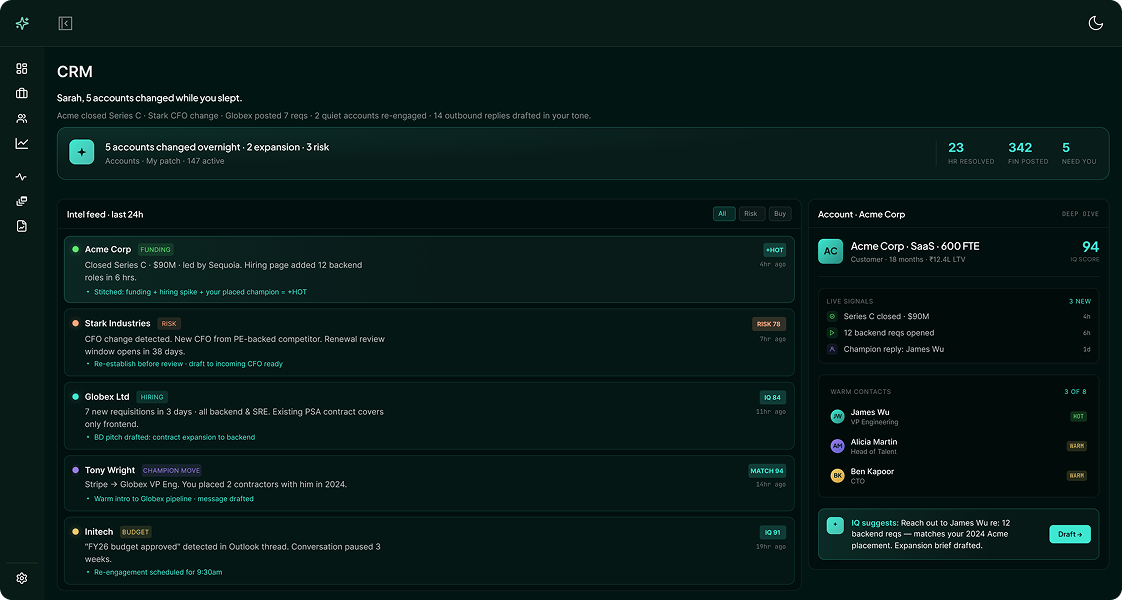

But not every platform claiming to be AI-powered is truly AI-native. Some tools simply layer basic automation on top of legacy workflows. Others use generative AI effectively but create compliance, bias, or operational risks. And many vendors promise end-to-end hiring transformation without demonstrating measurable outcomes.

Choosing the wrong ATS can lock your organization into inefficient workflows, fragmented data, and expensive migration cycles for years. Before signing a contract, recruiting leaders should conduct a rigorous evaluation process that goes beyond demos and marketing claims.

Is the AI Truly Native or Simply Added On?

One of the first things to clarify is whether the platform was designed with AI at its foundation or whether AI tools were retrofitted into a legacy system. Many vendors market automation features as artificial intelligence, even when those features rely on simple rule-based workflows.

An AI-native ATS should show machine learning and intelligent decision-making working across the entire recruitment lifecycle. The AI should actively support sourcing, candidate screening, interview coordination, communication, and analytics rather than existing as a standalone feature.

How Accurate Is the Candidate Matching Engine?

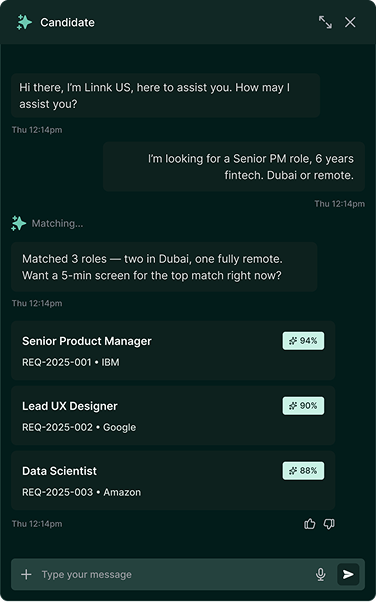

The quality of candidate matching directly affects hiring efficiency and recruiter trust in the platform. A strong AI-native ATS should go beyond keyword matching and understand context, skills adjacency, experience relevance, and role compatibility.

If a candidate has transferable experience or related technical expertise, the system should recognize those strengths even when exact keywords are missing from the resume. Advanced semantic matching and contextual understanding are critical capabilities.
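To make the difference concrete, here is a minimal sketch contrasting exact keyword matching with a score that gives partial credit for adjacent skills. It is an illustration only, not how any particular vendor's engine works: the `SKILL_ADJACENCY` map, its weights, and both function names are hypothetical, and a production system would more likely learn these relationships from data than hard-code them.

```python
# Hypothetical adjacency map: for each required skill, related skills and an
# assumed similarity weight between 0 and 1. The values are made up.
SKILL_ADJACENCY = {
    "react": {"vue": 0.7, "angular": 0.6},
    "postgresql": {"mysql": 0.8},
    "python": {"ruby": 0.5},
}


def keyword_score(required, candidate):
    """Fraction of required skills that appear verbatim in the candidate's skills."""
    required, candidate = set(required), set(candidate)
    if not required:
        return 0.0
    return len(required & candidate) / len(required)


def adjacency_score(required, candidate):
    """Like keyword_score, but gives partial credit for related skills."""
    required, candidate = list(required), set(candidate)
    if not required:
        return 0.0
    total = 0.0
    for skill in required:
        if skill in candidate:
            total += 1.0  # exact match earns full credit
        else:
            related = SKILL_ADJACENCY.get(skill, {})
            # Best partial credit among the candidate's related skills, else 0.
            total += max(
                (weight for name, weight in related.items() if name in candidate),
                default=0.0,
            )
    return total / len(required)
```

For a role requiring `react`, `postgresql`, and `python`, a candidate listing `vue`, `mysql`, and `python` scores about 0.33 on exact keywords but about 0.83 once adjacent skills count. That gap is the kind of difference worth probing in a demo with your own roles and resumes.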

Does the System Reduce Bias or Reinforce It?

AI in hiring can either improve fairness or unintentionally amplify existing bias. This makes ethical AI evaluation one of the most important parts of the buying process.

A responsible AI-native ATS should include safeguards against discriminatory outcomes. Vendors should explain how their models are trained, how bias is monitored, and what fairness controls are in place.
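One simple check a buyer can ask a vendor to demonstrate is an adverse impact ratio, based on the "four-fifths" rule of thumb from the US Uniform Guidelines on Employee Selection Procedures. The sketch below assumes you can export, per demographic group, how many applicants the system advanced and how many applied. The function names, data shape, and group labels are hypothetical. A ratio below 0.8 is a signal to investigate further, not a legal determination.

```python
def selection_rates(outcomes):
    """outcomes maps group -> (advanced, total_applicants); returns group -> rate."""
    return {
        group: advanced / total
        for group, (advanced, total) in outcomes.items()
        if total > 0  # skip groups with no applicants
    }


def adverse_impact_ratios(outcomes):
    """Each group's selection rate divided by the highest group's selection rate."""
    rates = selection_rates(outcomes)
    best = max(rates.values(), default=0.0)
    if best == 0.0:
        # Nobody was advanced in any group, so there is nothing to compare.
        return {group: 1.0 for group in rates}
    return {group: rate / best for group, rate in rates.items()}


def flagged_groups(outcomes, threshold=0.8):
    """Groups whose ratio falls below the threshold (0.8 is the four-fifths heuristic)."""
    ratios = adverse_impact_ratios(outcomes)
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)
```

As an example, if the system advanced 50 of 100 applicants in one group, 30 of 100 in a second, and 45 of 100 in a third, the second group's ratio is 0.6 and it would be flagged, while the third group's ratio of 0.9 would not. Ask vendors whether they run a check like this continuously and what happens when a group is flagged.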

"SaaS AI solutions are inherently scalable, allowing businesses of all sizes to access sophisticated AI tools that were once the preserve of large corporations with deep pockets."